Trunk Flaky Tests detects flaky tests by analyzing test results from your CI runs. Setup requires configuring test result output and CI upload integration.Documentation Index

Fetch the complete documentation index at: https://docs.trunk.io/llms.txt

Use this file to discover all available pages before exploring further.

Prerequisites

- Account at app.trunk.io

- Ability to modify repository CI configuration and add secrets

- Tests running in CI on both PRs and stable branches (e.g., main, master, or develop)

Step 1: Ensure JUnit XML output

Trunk ingests test results in JUnit XML format. If your CI already generates JUnit XML, note the file paths and skip to Step 2. If not, configure your test frameworks to output JUnit XML:- See Test Frameworks for framework-specific configuration

- Supports multiple frameworks simultaneously

Step 2: Configure CI uploads

Add test result uploads to all CI jobs that run tests.- See CI Providers for integration instructions

- Configure uploads in jobs that run on:

- Pull request branches

- Stable branches (

main,master,develop, etc.) - Merge queue branches (if applicable)

Trunk automatically recognizes

main, master, and develop as stable branches. If your primary branch uses a different name, configure uploads from that branch the same way and Trunk will classify it correctly.Step 3: Verify integration

- Push your changes and trigger a CI run

- Check CI logs for successful upload confirmation

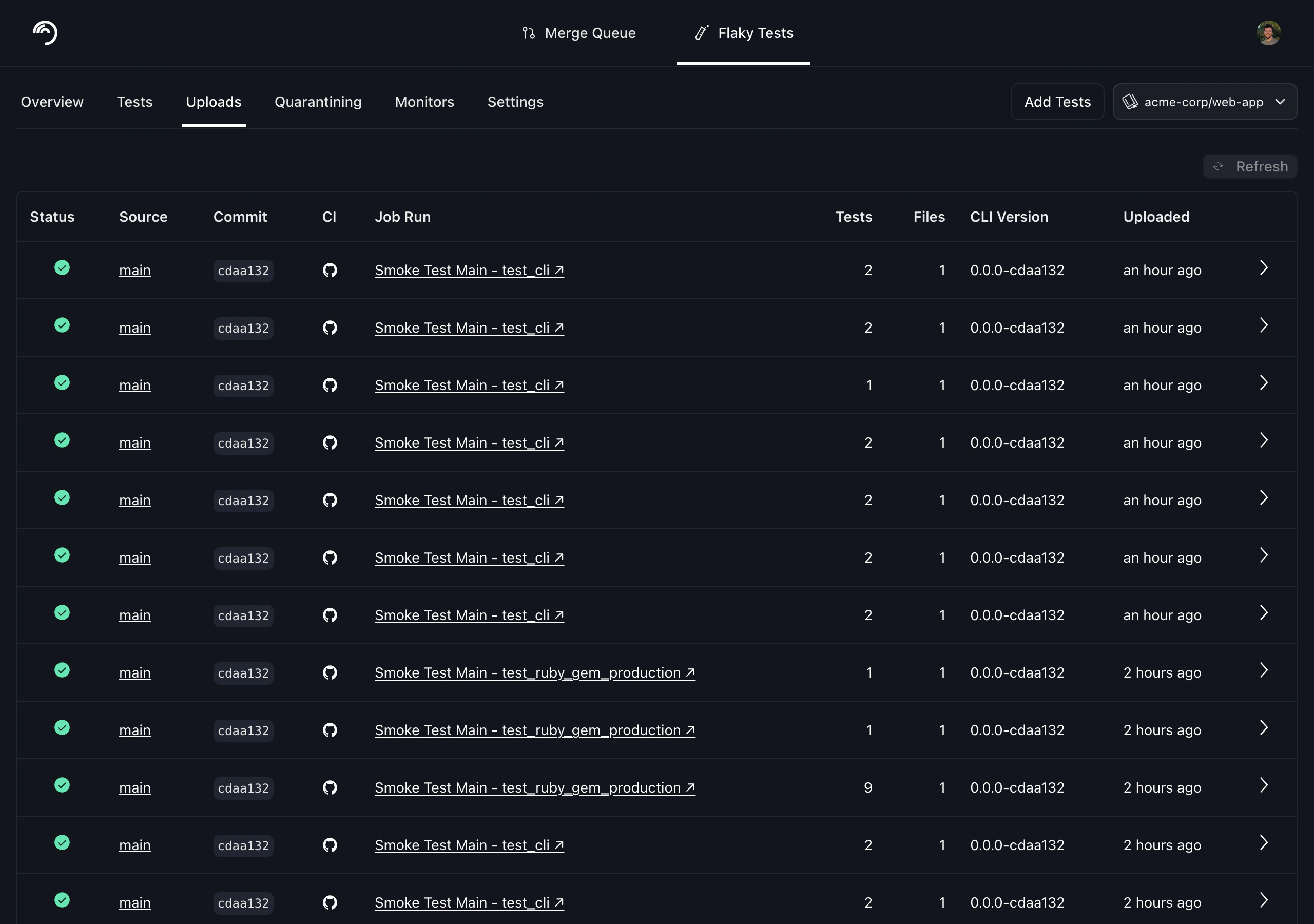

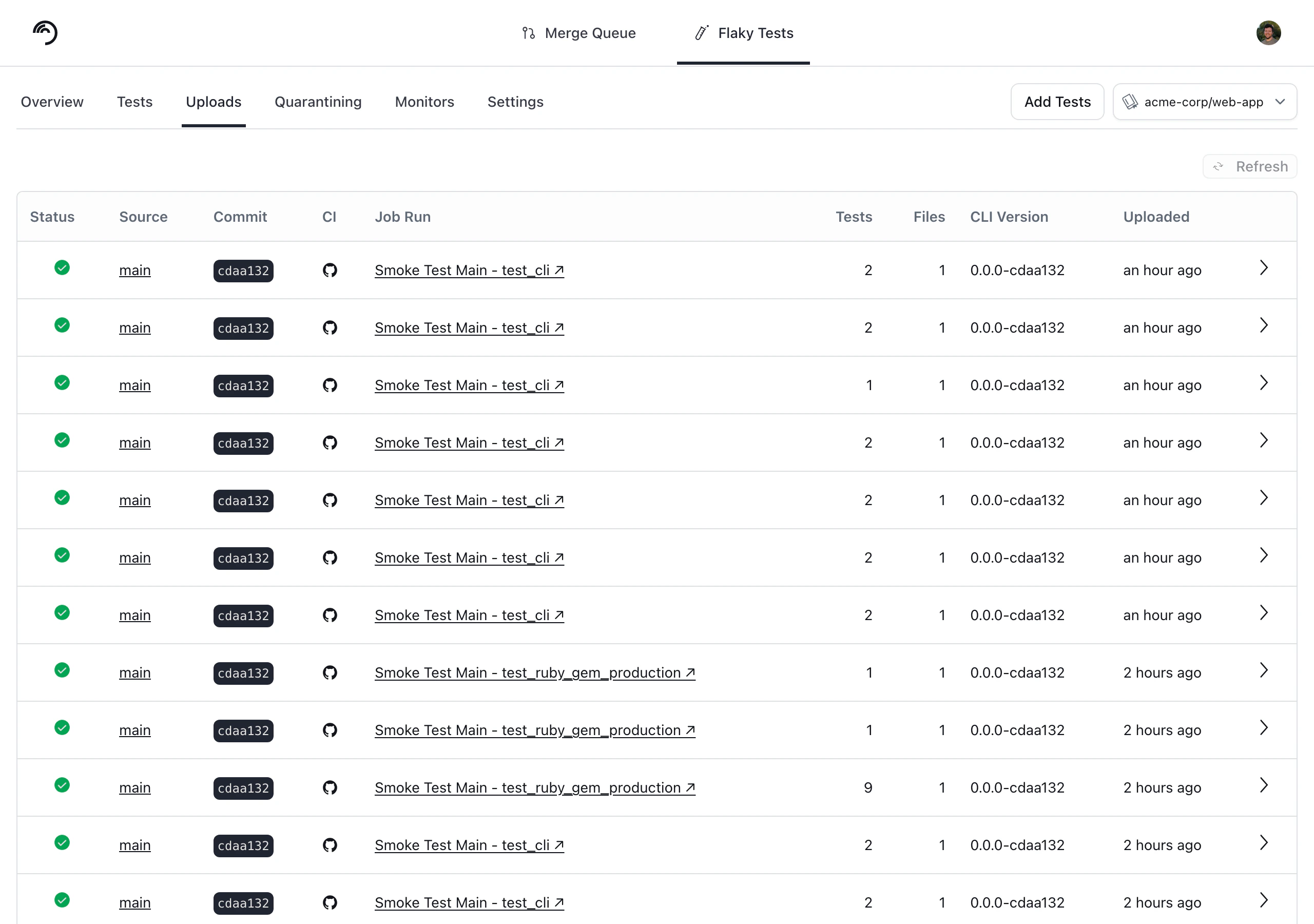

- Results typically appear within a few minutes. Verify uploads appear at app.trunk.io → your repo → Flaky Tests > Uploads

Step 4: Configure flake detection

After uploads are flowing, navigate to your repo → Flaky Tests > Monitors to set up detection. Pass-on-retry is enabled by default and is the recommended baseline for everyone. It catches the most common flakiness pattern — a test that fails and then passes on retry within the same commit — without any configuration needed. Failure rate monitors let you detect flakiness based on failure rate over a rolling time window. How you configure them depends on your CI setup:- If tests must pass before merging to main, set up a failure rate monitor scoped to

mainto catch an elevated failure rate. For example, if you run tests 5 times per day onmain, a 24-hour rolling window with a minimum of 4 runs and a failure threshold of 25% is a reasonable starting point. This gives the monitor enough data before flagging anything. - If you use a merge queue, consider a dedicated monitor scoped to your merge queue branches (e.g.,

trunk-merge/*orgh-readonly-queue/*). Failures here are especially suspicious since the code has already passed PR checks, so a low threshold is appropriate.